When AI generates an unexpected or incorrect result, it often can’t explain the reasoning behind it, because there is none. AI doesn’t follow a line of thought or a moral framework; it calculates probabilities. That’s why human review remains essential: only people can judge whether an outcome makes sense, fits the context or upholds fairness and ethical standards. Strategic finance and compliance leader Tahir Jamal argues that true governance begins not when systems detect anomalies but when humans decide what those anomalies mean, and that understanding this distinction is essential to ensuring accountability.

AI has transformed how organizations detect risk and enforce compliance. Dashboards flag anomalies in seconds, algorithms trace deviations with precision, and automation promises error-free oversight. Yet beneath this surface efficiency lies a deeper paradox: the more we automate control, the easier it becomes to lose sight of what governance actually means.

Governance has never been about control alone. It has always been about conscience. AI can audit the numbers, but it can’t govern the intent.

Automation often creates an illusion of control. Real-time dashboards and compliance signals may project confidence, but they can also obscure moral responsibility. When decisions appear to come from systems rather than people, accountability becomes diffused. The language shifts from “I approved it” to “the system processed it.” In traditional governance, decisions were tied to names; in algorithmic systems, they are tied to logs.

As organizations rely more on machine intelligence, the danger grows that leaders will mistake data for judgment. When compliance becomes mechanical instead of moral, governance loses its meaning. This illusion of algorithmic authority is the starting point for rethinking how humans must remain at the center of governance: not as bystanders but as interpreters of ethical intent.

When data meets conscience

During my tenure leading financial reforms under a US government–funded education project in Somalia, we implemented a mobile salary verification system to eliminate “ghost” teachers and ensure transparent payments. The automation worked: every teacher’s payment could be verified. Yet a recurring dilemma revealed AI’s limits. Teachers in remote areas often shared SIM cards to help colleagues withdraw salaries in no-network zones, a technical violation but a humanitarian necessity.

The data flagged it as fraud; only human judgment recognized it as survival. This experience exposed the gap between compliance and conscience, between what is technically correct and what is ethically right.
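
A rule like the one that caught the shared SIM cards can be sketched in a few lines. This is a hypothetical reconstruction, not the project’s actual system: the record layout (`teacher_id`, `sim_id` pairs) and the function name `flag_shared_sims` are assumptions for illustration. What it shows is that the rule can only count; nothing in its inputs describes network coverage or why a colleague helped.

```python
from collections import defaultdict


def flag_shared_sims(withdrawals):
    """Return each SIM used to withdraw salary for more than one teacher.

    `withdrawals` is a list of (teacher_id, sim_id) pairs. This layout is a
    hypothetical stand-in for whatever the real verification system stored.
    """
    teachers_by_sim = defaultdict(set)
    for teacher_id, sim_id in withdrawals:
        teachers_by_sim[sim_id].add(teacher_id)
    # The rule sees only how many distinct teachers used each SIM. It has no
    # input for network coverage, distance or whether the colleague consented,
    # so an act of assistance and an act of fraud look identical here.
    return {sim: sorted(ids) for sim, ids in teachers_by_sim.items() if len(ids) > 1}


# Hypothetical data: T001 withdraws on behalf of T002, who has no signal.
withdrawals = [("T001", "SIM-A"), ("T002", "SIM-A"), ("T003", "SIM-B")]
print(flag_shared_sims(withdrawals))  # {'SIM-A': ['T001', 'T002']}
```

Both readings of that flag, fraud or survival, produce exactly the same output; only a person with context can tell them apart.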

The same dilemma exists in corporate contexts. Amazon’s now-retired AI recruiting tool favored résumés from men because it learned patterns from biased historical hiring data. The 2019 Apple Card controversy centered on reports that women received lower credit limits than men with similar financial profiles. In both cases, the algorithms were consistent, but consistently biased. These examples remind us that automation can amplify bias as easily as it can prevent it.
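
One way to surface “consistent but consistently biased” behavior is to compare outcome rates across groups instead of inspecting decisions one at a time. The sketch below is illustrative only: the function names and sample data are hypothetical, and the 0.8 threshold borrows the “four-fifths” rule of thumb from US employment-selection guidelines as a screening heuristic, not a legal test. A low ratio tells reviewers where to look; it does not tell them whether the gap is justified.

```python
def selection_rates(decisions):
    """Return the share of positive outcomes per group.

    `decisions` is a list of (group, selected) pairs, where `selected` is a bool.
    """
    totals, selected = {}, {}
    for group, chosen in decisions:
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + int(chosen)
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratio(rates):
    """Lowest group rate divided by highest group rate (1.0 means parity)."""
    highest = max(rates.values())
    # If no group received a positive outcome, there is no disparity to report.
    return min(rates.values()) / highest if highest else 1.0


# Hypothetical screening results: the same model scored every applicant.
decisions = ([("A", True)] * 6 + [("A", False)] * 4
             + [("B", True)] * 3 + [("B", False)] * 7)
rates = selection_rates(decisions)
ratio = impact_ratio(rates)
print(rates, round(ratio, 2))  # {'A': 0.6, 'B': 0.3} 0.5
if ratio < 0.8:  # illustrative four-fifths screening threshold
    print("Disparity flagged for human review")
```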

In corporate finance, healthcare or supply chains, AI can quickly spot what looks unusual: an odd payment, a questionable claim or a sudden price change. But recognizing a pattern isn’t the same as understanding it. Only people can tell whether it signals fraud, urgency or a genuine need. As I often remind my teams, “Machines can highlight what’s different; humans decide what’s right.”
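
The gap between spotting and understanding shows up even in a simple statistical check. The sketch below assumes new payments are compared against a hypothetical history of past amounts using a z-score, which is one common technique among many and not necessarily what any particular product uses. It flags what is different; deciding whether a flagged payment means fraud, urgency or genuine need is left, by design, to a person.

```python
from statistics import mean, stdev


def flag_unusual(history, new_amounts, threshold=3.0):
    """Return new amounts more than `threshold` standard deviations from history.

    `history` needs at least two values so a sample standard deviation exists.
    """
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        # Every past amount was identical, so any change at all is "different".
        return [x for x in new_amounts if x != mu]
    return [x for x in new_amounts if abs(x - mu) / sigma > threshold]


history = [100, 102, 98, 101, 99, 100]  # hypothetical past payments
print(flag_unusual(history, [101, 500]))  # [500]
# The output says only "this is different"; it carries no reason.
```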

Beyond explainability: Building human-centered governance

“Explainable AI,” the idea that automated decisions should be reviewable by humans, has become a popular phrase in governance circles. Yet explainability is not the same as understanding, and transparency alone doesn’t guarantee ethics.

Most AI systems, especially generative models that produce outputs such as reports or forecasts from learned patterns, operate as black boxes whose internal logic is often opaque even to their designers. They don’t reason or weigh choices as humans do; they simply predict what seems likely based on previous data.
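
The phrase “predict what seems likely based on previous data” can be made concrete with a deliberately tiny stand-in. Production generative models are large neural networks, not word counters, so treat the bigram model below as an assumption-laden illustration of the principle only: it ranks continuations by how often they followed a word in past text, and at no point weighs whether a continuation is true or appropriate.

```python
from collections import Counter, defaultdict


def train_bigrams(words):
    """Count how often each word follows each other word in the training text."""
    following = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        following[prev][nxt] += 1
    return following


def next_word_probabilities(following, word):
    """Convert follow-on counts for `word` into relative frequencies."""
    counts = following.get(word, Counter())
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()} if total else {}


# Hypothetical training text made of past system messages.
text = "payment approved payment approved payment flagged".split()
model = train_bigrams(text)
print(next_word_probabilities(model, "payment"))
# "approved" comes out at about 0.67 simply because it appeared more often.
```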

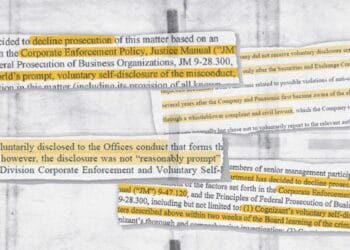

When an algorithm assigns a risk score or flags a transaction, it performs pattern recognition, identifying trends such as unusual payments or behaviors, but it doesn’t understand intent or consequence. So when a system produces an unfair or biased result, it may show which factors influenced the decision, but it can’t explain why that outcome is right or wrong.
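
For a simple linear risk score, “which factors influenced the decision” reduces to arithmetic. The factor names and weights below are hypothetical, and real scoring models are usually more complex, but the limitation carries over: the breakdown ranks what pushed the score up, and nothing in it says whether a new payee or a large amount is legitimate.

```python
def risk_score(features, weights):
    """Return a weighted-sum score plus each factor's contribution to it."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    return sum(contributions.values()), contributions


# Hypothetical weights and one transaction's features.
weights = {"amount_vs_average": 0.5, "new_payee": 2.0, "off_hours": 1.0}
features = {"amount_vs_average": 6.0, "new_payee": 1, "off_hours": 0}

score, contributions = risk_score(features, weights)
print(score)  # 5.0
# The "explanation": inputs ranked by how much they moved the score.
for name, value in sorted(contributions.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: {value:+.1f}")
```

A ranked list of inputs is as far as the system’s account goes; judging whether those inputs justify blocking the payment remains a human call.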

Explainability, not automation, is what builds trust in AI-driven systems. True governance therefore demands interpretation, not just inspection. For compliance leaders, AI outputs should always be treated as advisory rather than authoritative. Audit trails need human interpretability and accountability.

To make governance human by design, organizations must build ethics into their system architecture:

- Define decision rights: Every algorithmic recommendation should have a responsible human reviewer. Traceability restores ownership.

- Require interpretability, not blind explainability: Leaders must understand enough of the system’s logic to question it. A decision that can’t be challenged shouldn’t be implemented.

- Establish ethical oversight committees: Boards should review model behavior, including fairness, inclusion and unintended impact, not just performance.

- Maintain escalation pathways: Automated alerts must trigger human judgment. Escalation keeps ethical intervention alive.
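
Decision rights, advisory outputs and escalation can be expressed as constraints on a decision record. The sketch below is hypothetical: the `Recommendation` class, its fields and the escalation threshold are illustrative assumptions, not a specific product’s design. Every model output has a named owner, high-risk items stay open until a human acts, and no decision becomes final without a recorded rationale, so the log points to a name rather than to “the system.”

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Recommendation:
    """An algorithmic output that stays advisory until a named human decides."""

    case_id: str
    model_output: str  # e.g. "flag_as_fraud"; a suggestion, never a verdict
    risk_score: float
    owner: str  # decision rights: the accountable human reviewer
    decision: Optional[str] = None
    rationale: Optional[str] = None
    decided_at: Optional[datetime] = None

    def needs_escalation(self, threshold: float) -> bool:
        """High-risk items that no human has decided yet must be escalated."""
        return self.decision is None and self.risk_score >= threshold

    def decide(self, reviewer: str, decision: str, rationale: str) -> None:
        """Record a human decision; refuse it without ownership and reasoning."""
        if reviewer != self.owner:
            raise PermissionError(f"{reviewer} does not hold decision rights for {self.case_id}")
        if not rationale.strip():
            raise ValueError("a decision must record the reviewer's reasoning")
        self.decision = decision
        self.rationale = rationale
        self.decided_at = datetime.now(timezone.utc)


# Hypothetical case echoing the shared-SIM example.
rec = Recommendation("case-17", "flag_as_fraud", risk_score=0.92, owner="reviewer.a")
print(rec.needs_escalation(threshold=0.8))  # True
rec.decide("reviewer.a", "approve", "Colleague withdrew on the teacher's behalf in a no-network zone.")
print(rec.needs_escalation(threshold=0.8))  # False
```

Refusing a decision without a rationale is a deliberate design choice: it forces the “why” that the model cannot supply into the audit trail.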

When technology serves human conscience rather than replacing it, governance becomes both intelligent and ethical. The real measure of AI maturity is not predictive accuracy but moral accountability.

Restoring integrity in the age of automation

As AI becomes embedded in every audit, workflow and control, the question is no longer whether machines can govern effectively but whether humans can still govern rightly. Governance is not about managing data; it’s about guiding behavior. Algorithms can optimize certain compliance functions, but they cannot embody ethics.

To lead in this new era, organizations must cultivate leaders fluent in both code and conscience: professionals who understand how technology works and why ethics matter. Future compliance officers will need as much literacy in algorithmic logic as they have in financial controls. They will serve as translators between machine precision and human principle, ensuring that innovation never outruns accountability.
