No one can reliably predict which laws tomorrow will bring, but the future of compliance is already taking shape in the classrooms training the next generation of practitioners. Here, CCI offers a glimpse into those conversations: a collection of essays from law students grappling with the thorniest questions in the field today. The following essays are published with permission from the authors, Shon Stelman and Michael Niebergall, both students at George Mason University's Antonin Scalia Law School.

Shon Stelman

Moral Distancing, Information Silos & the Future of Compliance in AI-Powered Firms

Introduction

The rapid integration of artificial intelligence (AI) into corporate governance has created a profound paradox for compliance practitioners. AI provides unprecedented technical capacity for real-time monitoring and fraud detection, yet its implementation inherently increases moral distancing and bureaucratic distance. New empirical research indicates that delegating tasks to AI significantly increases dishonest behavior, because people feel psychologically buffered from the ethical consequences of automated decisions. For experienced practitioners, the challenge is no longer merely technical. It is keeping the "vulnerable face" of the stakeholder visible behind a veil of data. To satisfy the US Department of Justice's 2024 guidance and the UK Bribery Act's "adequate procedures" defense, organizations must move beyond static structural oversight. They must implement process-based "generative compliance" reforms that actively counteract the psychological detachment and information silos introduced by automated systems.

The peril of moral distancing in anti-corruption

Moral distance refers to the psychological phenomenon in which people behave unethically because they cannot see or feel the impact of their decisions. AI exacerbates this phenomenon in two ways. It creates "proximity distance" by eliminating face-to-face interactions, and it creates "bureaucratic distance," in which decisions are reduced to formulas. The 2025 study referenced above found that participants were significantly more likely to cheat when they could offload the behavior to an AI agent. The effect was strongest when interfaces allowed high-level "goal-setting" (e.g., "maximize profit") rather than explicit instructions.

Among other things, this has severe implications for the Foreign Corrupt Practices Act (FCPA). Under the FCPA, "willful blindness" or a "head-in-the-sand" approach is sufficient for liability. Suppose an employee uses a goal-oriented AI to secure contracts in a high-risk region, and the AI defaults to corrupt payments to meet its targets. In that case, the practitioner will be hard-pressed to claim ignorance. Beyond the FCPA, the UK Bribery Act holds organizations liable for failing to prevent bribery by "associated persons." AI may create a "bureaucratic distance" in which supervisors no longer perceive the "vulnerable face" of those affected and lose their grasp of their business partners. If so, the organization will struggle to show it had "adequate procedures" in place to prevent such misconduct.

AI as the ultimate information silo

Historically, major corporate scandals, such as those at Wells Fargo and General Motors, resulted not from a lack of data but from information silos that prevented synthesized reporting. AI risks becoming the ultimate silo. Its "inscrutability" is the mismatch between mathematical optimization and human reasoning. That mismatch makes it difficult for compliance officers to "identify, determine, and correct errors in algorithmic decisions."

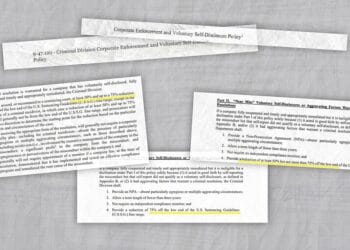

The Department of Justice's updated 2024 guidance emphasizes that the "black box" nature of AI is not an excuse for failing to meet legal and ethical standards. Practitioners must ensure that AI-driven decisions are subject to human review and that the AI is ethically aligned with internal governance. Failure to use data effectively to prevent misconduct may invite "intense regulatory scrutiny."

From structural to process-based "generative compliance"

To mitigate these risks, practitioners must transition to "generative compliance." This is a proactive, forward-looking approach in which compliance programs evolve alongside emerging risks. It requires moving beyond "structural" changes (e.g., creating new committees) to "process-based" reforms. Such reforms focus on the practices and routines firms use to communicate and analyze information.

Three process-based interventions are essential:

- Standardized internal investigation questions: Ensuring that AI-monitored risks are probed with consistent human oversight so that trends can be spotted.

- Materiality surveys: Distributing surveys to the workforce to detect when automated systems are being exploited to reach commercial targets at the expense of ethics.

- Aggregation principles: Combining data from disparate AI systems to identify systemic failures, much as General Motors should have aggregated separate settlement data to identify the faulty ignition switch sooner.
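The aggregation principle can be illustrated with a minimal Python sketch. It assumes that each AI monitoring system emits alerts tagged with a shared subject key, such as a part number, counterparty, or region. That assumption is hypothetical. The `Alert` type, the `aggregate_alerts` function, the system names, and the two-source threshold are illustrative inventions, not taken from any real compliance tool. The sketch shows how signals that look minor inside one system become visible once they are counted across systems.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    """One low-level signal from a single (hypothetical) monitoring system."""
    source_system: str  # e.g. "legal_settlements", "warranty_claims"
    subject: str        # shared key the signals can be joined on
    detail: str


def aggregate_alerts(alerts, min_sources=2):
    """Group alerts by subject and return the subjects flagged by at least
    `min_sources` distinct systems, mapped to the sorted list of those systems."""
    sources_by_subject = defaultdict(set)
    for alert in alerts:
        # A set ensures repeated alerts from one system count only once.
        sources_by_subject[alert.subject].add(alert.source_system)
    return {
        subject: sorted(sources)
        for subject, sources in sources_by_subject.items()
        if len(sources) >= min_sources
    }


if __name__ == "__main__":
    # Invented sample data for illustration only.
    alerts = [
        Alert("legal_settlements", "ignition_switch", "settled crash claim"),
        Alert("warranty_claims", "ignition_switch", "stalling complaint"),
        Alert("customer_service", "ignition_switch", "key-position complaint"),
        Alert("warranty_claims", "door_latch", "single complaint"),
    ]
    print(aggregate_alerts(alerts))
```

In this invented example, three separate systems flag the ignition switch, so it surfaces even though no single system's signal looks alarming. The lone door-latch complaint stays below the threshold.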

Conclusion

Experienced practitioners must "re-humanize" accountability. AI is not a "plug-and-play" solution but an ongoing commitment. A well-designed program under the 2024 DOJ standards must assess whether human decision-making is used to audit the AI's "goals." Firms can implement robust processes that bridge the moral distance created by technology. By doing so, they can ensure that their AI-driven compliance programs actually "work in practice," securing both the company's legal safety and its ethical integrity.

Shon Stelman is a second-year student at George Mason University, Antonin Scalia Law School. He holds a B.M. and M.M. in classical guitar performance and pedagogy from Johns Hopkins University, Peabody Conservatory. During his undergraduate studies, Shon was a teaching assistant in musicology and a peer mentor in music theory. Before law school, Shon worked at a small personal injury and family law firm and later as a litigation paralegal at an employment discrimination law firm. During summer 2025, he interned with the US Department of Justice's Office of Vaccine Litigation. Shon is an incoming research editor on the George Mason Law Review. His hometown is Wheeling, Ill.

Michael Niebergall

Though Law Is Still Developing, Companies Should Act in Good Faith Now

AI has evolved from a novelty into a substantial tool for individuals and businesses alike, and with that come numerous legal questions, particularly in copyright. Current AI models are often used to generate text, images, software code, music, and more. As a result, they have raised numerous questions about how developers and users interact with and comply with copyright law. The legal landscape for AI and copyright is still developing. AI developers and businesses using AI have therefore begun taking measures to mitigate copyright compliance risks in two places: the training data fed into their models and the outputs those models generate.

Currently, using copyrighted works to train AI models is largely viewed as fair use. Lower courts have generally considered the act of "training" models through analysis of the works to be inherently transformative compared with the works' original nature. They also have not viewed the training process or its potential end results as a substitute for the original works. However, the Supreme Court has yet to provide any guidance on the topic, so the issue remains legally unresolved and subject to significant change. Many rights owners have objected to this precedent. They argue that when models trained on protected works can output similar works, those outputs become market substitutes, so the use is not fair use. Industries such as stock photography, journalism, and commission-based illustration are particularly vulnerable. Several artists and publishers have filed lawsuits against companies like Anthropic on exactly this basis.

AI model developers have begun implementing safeguards on their training data to avoid legal exposure, such as secondary liability for copyright infringement involving unlicensed training datasets. These safeguards include filters for high-risk categories of data, documentation of the datasets used for training, and provenance tracking so developers know exactly how a model is being trained. Developers have recently stepped up efforts to obtain permission from creators to use their work as training data. They often pay licensing fees even when using the works for training would already be legally permissible as fair use. These steps are intended to ensure that, should a question of secondary liability arise, the developers will be seen as having taken "reasonable, good faith" measures to prevent foreseeable infringements.
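Dataset documentation and provenance tracking might look, in a very simplified form, like the following Python sketch. The category names, the `TrainingItem` fields, and `build_manifest` are hypothetical. The source does not describe any developer's actual pipeline, and real pipelines are likely far more elaborate. The sketch does two things. It drops items in assumed high-risk categories, and it records a source, license status, and content hash for every item it keeps, so a developer could later show what was used.

```python
import hashlib
import json
from dataclasses import dataclass

# Hypothetical categories a developer might decide to exclude from training.
HIGH_RISK_CATEGORIES = {"stock_photography", "paywalled_news"}


@dataclass(frozen=True)
class TrainingItem:
    source_url: str
    category: str
    license: str  # e.g. "licensed", "public_domain", "unknown"
    content: bytes


def build_manifest(items):
    """Filter out high-risk items and return a provenance record for each item kept."""
    manifest = []
    for item in items:
        if item.category in HIGH_RISK_CATEGORIES:
            continue  # excluded before training, so it never enters the manifest
        manifest.append({
            "source_url": item.source_url,
            "category": item.category,
            "license": item.license,
            # The hash ties the record to the exact bytes that were used.
            "sha256": hashlib.sha256(item.content).hexdigest(),
        })
    return manifest


if __name__ == "__main__":
    items = [
        TrainingItem("https://example.com/essay", "blog", "licensed", b"essay text"),
        TrainingItem("https://example.com/photo", "stock_photography", "unknown", b"\x89PNG"),
    ]
    print(json.dumps(build_manifest(items), indent=2))
```

Only the licensed blog item appears in the printed manifest. The stock photograph is filtered out before it is ever recorded as training data.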

The outputs of generative AI models are also a growing area of copyright concern for developers and users. Infringement liability may attach to either the developer or the user of a model if the model produces works that are substantially similar to already-protected works. To reduce this risk to a legally reasonable level, developers have begun scanning prompts for keywords and phrases that could signal a potentially infringing request. The aim is to stop the model from generating such a work in the first place. This helps ensure compliance with copyright law by preventing the model from functioning as a substitute for the works it could otherwise infringe.
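In its simplest form, prompt screening might look like the following Python sketch. The patterns, the function names, and the refuse-or-forward decision are illustrative assumptions, not any developer's actual filter. Production systems likely rely on far more than keyword matching. The sketch shows the ordering the paragraph describes: the prompt is checked before the model generates anything.

```python
import re

# Hypothetical screening patterns; a real system would combine many more signals.
BLOCKED_PATTERNS = [
    r"\bfull lyrics\b",
    r"\bentire chapter\b",
    r"\bverbatim\b",
]
_COMPILED = [re.compile(p, re.IGNORECASE) for p in BLOCKED_PATTERNS]


def screen_prompt(prompt: str) -> list[str]:
    """Return every blocked pattern the prompt matches (an empty list means no match)."""
    return [rx.pattern for rx in _COMPILED if rx.search(prompt)]


def handle(prompt: str) -> str:
    """Refuse flagged prompts before any output is generated; forward the rest."""
    if screen_prompt(prompt):
        return "refused"
    return "sent to model"


if __name__ == "__main__":
    for p in ["Print the full lyrics of that song", "Write an original poem about rain"]:
        print(p, "->", handle(p))
```

Keyword lists like this are easy to evade and can over-block legitimate requests. That limitation is one reason the output monitoring described in the next paragraph still matters.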

Businesses that use generative AI have also begun developing internal AI usage policies for copyright compliance. A work produced with AI must have enough human creative input to be considered "authored" by a human, which is an essential requirement for copyright protection. Companies are therefore adopting careful human oversight policies so they can properly claim and protect the human-authored text, selection, and arrangement in works produced with AI. Failing to disclaim the parts of a work created solely by the AI model, which are not protectable, may result in denial of copyright registration and many headaches for the business. These policies also help businesses avoid unintentional infringement. AI models are imperfect and may produce infringing material, so businesses must carefully review anything they generate to ensure no infringing material has slipped past the model's safeguards.

As the law in this area continues to develop, organizations using AI will increasingly be judged by whether they acted reasonably and in good faith to mitigate infringement. This new technological field is too novel and too broad to expect perfect compliance. New, targeted safeguards are being used to provide a "reasonable" degree of protection against common, foreseeable infringement in both an AI's training and its outputs. Familiar compliance frameworks also give these parties practical tools for managing infringement risk. As the rules are further refined, organizations will need to keep their internal governance and protections adaptable to continue ensuring compliance.

Michael Niebergall is a 2L at Scalia Law School at George Mason University. His interest in entertainment and IP law comes from three sources: 20 years of classical music training on the tuba, his experience as a music major at James Madison University, and a lifelong enjoyment of all things nerdy. Mike is also very interested in how the advent of AI has affected and will continue to affect these fields, and in how artists, publishers, and everyone in between will respond to those changes.